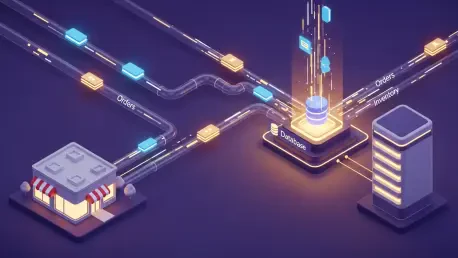

Maintaining a competitive edge in today’s digital marketplace requires more than a collection of expensive software subscriptions; it demands a unified architectural strategy that bridges the gap between customer intent and back-office execution. In enterprise retail, having a best-in-class tech stack is only half the battle. An organization may run a logistics-heavy Enterprise Resource Planning (ERP) system and a world-class Customer Relationship Management (CRM) tool, but without seamless ecommerce data integration, these systems effectively speak different languages. This lack of synchronization creates what is known as the fragmentation tax, a misalignment that costs large organizations an average of $12.9 million annually.

To remain competitive, commerce teams must move beyond siloed operations. This guide provides a comprehensive seven-step roadmap to integrating operational and analytical data layers, ensuring that every system, from the storefront to the back-office finance tools, works from a single, unified version of the truth. Integration is no longer a luxury but a fundamental requirement for any business attempting to navigate the complexities of modern consumer expectations and supply chain volatility. By aligning these disparate data points, a business can transform from a reactive entity into a proactive, data-driven powerhouse.

Overcoming the Fragmentation Tax in Modern Digital Commerce

The fragmentation tax manifests as a series of hidden costs that slowly erode the profitability of even the most successful brands. When inventory data is stuck in a warehouse management system and does not update the digital storefront in real time, the result is often overselling, leading to cancelled orders and frustrated customers. Similarly, when customer data remains locked in a support platform without reaching the marketing automation engine, the brand loses the ability to provide personalized experiences that drive repeat purchases. These inefficiencies aggregate over time, creating a massive operational burden that slows down innovation and prevents the business from scaling effectively.

In the current landscape, the ability to move data with agility has become the primary differentiator between market leaders and those struggling with operational drag. Sophisticated commerce operations understand that data is a fluid asset that must flow unhindered across the organization. By eliminating the manual entry points and brittle spreadsheets that traditionally acted as the connective tissue between departments, companies can reclaim thousands of man-hours. This shift allows the workforce to focus on high-value strategic initiatives rather than basic data reconciliation, effectively turning the integration project into a significant driver of return on investment.

Why Integrated Data Architecture is the Prerequisite for Scale

In the past, data integration was often viewed as a nice-to-have technical project relegated to the IT department. Today, it is the backbone of retail survival and a foundational element for any organization looking to leverage advanced automation. As organizations shift toward API-first architectures, the ability to move data in real time between disparate applications has become essential. This connectivity enables unified commerce, where inventory turns over faster and customer lifetime value rises because the business functions as one cohesive unit rather than a collection of independent departments.

The Shift Toward API-First Ecosystems

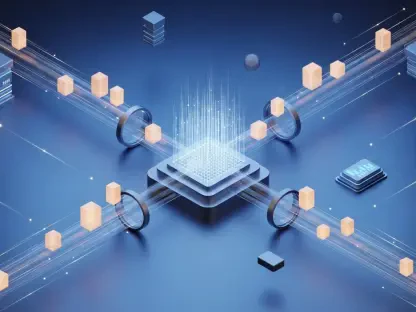

The modern commerce stack is no longer a monolithic block; it is a complex web of interconnected services designed to handle specific business functions with precision. With over 80 percent of organizations adopting API-first strategies, the focus has shifted from simple data storage to fluid data movement. This architecture allows a business to swap out individual components, such as a shipping provider or a tax calculation engine, without disrupting the entire ecosystem. The result is a more resilient and flexible infrastructure that can adapt to changing market conditions or new consumer trends with minimal friction.

Moreover, the API-first approach enables a deeper level of customization and extensibility that was previously impossible with rigid, legacy systems. Developers can build custom applications that tap into the core commerce engine, creating unique brand experiences that set the company apart from competitors. This modularity ensures that the technology stack remains modern and capable of supporting the latest innovations in payment processing, augmented reality, or voice commerce. By embracing this level of connectivity, an organization ensures its technical foundation is ready for whatever comes next in the digital retail space.

The High Cost of Disconnected Systems

Fragmented data is not just an IT headache; it is a formidable barrier to innovation that can derail the most ambitious corporate strategies. Research suggests that 60 percent of artificial intelligence projects will be abandoned through the current year because the underlying data is not AI-ready. When a warehouse management system, an ERP, and a digital storefront are out of sync, the business loses the ability to make fast, automated decisions. This leads to critical errors such as shipping delays, incorrect pricing, and eroded customer trust, all of which are difficult to repair once the damage is done.

The financial implications of these disconnected systems extend beyond lost sales to include increased operational expenses and reduced employee morale. Staff members often find themselves performing repetitive tasks to bridge the gaps between systems, leading to a higher rate of human error and burnout. In contrast, a well-integrated system provides a clear, real-time view of the business, enabling executives to make informed decisions based on accurate data rather than gut feeling. Without this level of transparency, the organization is essentially flying blind in a highly competitive and volatile market.

A 7-Step Blueprint for Successful Ecommerce Integration

Success in data integration requires a methodical approach that balances technical precision with strategic business goals. This seven-step blueprint is designed to guide an organization through the complexities of connecting various platforms while minimizing risk and maximizing value. By following this structured path, a commerce team can ensure that the integration project stays on track and delivers the intended benefits to the entire enterprise. Each step builds upon the previous one, creating a solid framework that supports long-term growth and operational excellence.

Step 1: Define Your Priorities and Critical Path

Not all data flows are created equal, and attempting to integrate everything at once is a recipe for project failure. The first week of any integration project should be dedicated to identifying which data movements protect revenue and which are merely secondary. This prioritization ensures that the most critical functions are addressed first, providing immediate value to the organization. By focusing on the high-impact areas, the team can demonstrate quick wins that build momentum and secure continued support from stakeholders across the company.

Assessing Financial Exposure and Operational Dependency

The focus must first remain on Tier 1 flows, which typically include inventory levels, order processing, and returns management. If these specific flows fail, the business essentially stops functioning, leading to immediate financial loss and reputational damage. For business-to-business entities, price accuracy is often the top priority to ensure contract compliance, while omnichannel retailers must focus on hyper-local inventory syncing to support buy-online-pick-up-in-store initiatives. Understanding these dependencies allows the technical team to allocate resources where they will have the greatest impact on the bottom line.

Eliminating the Manual “CSV Handoff”

A crucial part of this initial step involves identifying any process that currently requires a human to upload or download a spreadsheet. These manual touchpoints are the highest points of failure within an organization and should be the first candidates for complete automation. Human intervention in data movement inevitably leads to delays, typos, and inconsistent records that can take weeks to rectify. By replacing these manual handoffs with automated API calls, the business can significantly increase data accuracy and free up employees to focus on more strategic tasks.

Step 2: Establish an Authoritative Source of Truth (SoT)

In a complex technology stack, every piece of data must have a designated home system that holds the final word on its accuracy. Without a clearly defined source of truth, different departments may end up working with conflicting information, leading to confusion and operational errors. Establishing this authority ensures that when a discrepancy arises, there is a clear rule for which system should be trusted. This structural clarity is essential for maintaining data integrity as the business grows and adds more applications to its ecosystem.

Assigning Ownership to Core Data Categories

Generally, inventory truth should reside in the warehouse management system, as it is the closest to the physical product. Customer intent and order details are typically best managed within the ecommerce platform, such as Shopify, while product specifications often originate in a dedicated Product Information Management system. Finance and tax data, on the other hand, should usually have their primary home in the ERP. Assigning ownership in this manner prevents systems from trying to overwrite each other, ensuring that updates propagate correctly across the entire stack.
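One way to make this ownership explicit is a small lookup that every sync job consults before writing. The sketch below is illustrative only: the category names, the system labels (`wms`, `ecommerce`, `pim`, `erp`), and the `accept_update` helper are assumptions for the example, not part of any particular platform.

```python
# Hypothetical ownership map: each data category has exactly one
# authoritative system. Names are illustrative assumptions.
SOURCE_OF_TRUTH = {
    "inventory": "wms",          # closest to the physical product
    "orders": "ecommerce",       # customer intent and order details
    "product_specs": "pim",      # product information management
    "finance": "erp",
    "tax": "erp",
}


def accept_update(category: str, origin_system: str) -> bool:
    """Return True only when the update comes from the category's owner."""
    owner = SOURCE_OF_TRUTH.get(category)
    if owner is None:
        raise KeyError(f"no owner assigned for category {category!r}")
    return origin_system == owner
```

With a rule like this in place, an ERP job that tries to write inventory counts is rejected instead of silently overwriting the warehouse figure.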

Defining North Star Metrics for Technical Health

Technical teams must establish clear key performance indicators to measure the ongoing health and efficiency of the integration. Common metrics include sync latency, which is the number of seconds it takes for a change in one system to appear in another, and the data integrity rate, which tracks the percentage of records that transfer without errors. By monitoring these metrics, the organization can identify bottlenecks or system failures before they impact the customer experience. These indicators serve as an early warning system, allowing the team to maintain high standards of performance and reliability.
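Both metrics reduce to simple arithmetic, which the following sketch makes concrete. The function names and the choice to express the integrity rate as a percentage are assumptions for illustration.

```python
from datetime import datetime, timezone


def sync_latency_seconds(changed_at: datetime, visible_at: datetime) -> float:
    """Seconds between a change in the source system and its appearance downstream."""
    return (visible_at - changed_at).total_seconds()


def data_integrity_rate(total_records: int, failed_records: int) -> float:
    """Percentage of records that transferred without errors."""
    if total_records <= 0:
        raise ValueError("total_records must be positive")
    return 100.0 * (total_records - failed_records) / total_records


# Example: a stock change made at 12:00:00 UTC shows up on the storefront at 12:00:04 UTC.
changed = datetime(2024, 3, 1, 12, 0, 0, tzinfo=timezone.utc)
visible = datetime(2024, 3, 1, 12, 0, 4, tzinfo=timezone.utc)
latency = sync_latency_seconds(changed, visible)   # 4.0
integrity = data_integrity_rate(1000, 5)           # 99.5
```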

Step 3: Match the Integration Pattern to the Data Pulse

Different types of data require different speeds of movement, and forcing all data through a real-time pipe can lead to system gridlock. Understanding the pulse of various data categories allows the architecture team to choose the most efficient integration pattern for each use case. This strategic approach ensures that mission-critical information moves instantly while high-volume analytical data is processed in a way that does not strain system resources. Balancing these needs is key to maintaining a responsive and stable commerce environment.

Sprinting with Operational Event-Driven Architecture

For data that requires immediate action, such as a customer placing an order or a warehouse updating inventory levels, an event-driven architecture is the most effective choice. Using webhooks allows systems to push information as soon as an event occurs, ensuring that all relevant platforms are updated within seconds. This real-time synchronization is vital for modern commerce, where customers expect instant confirmation of their purchases and accurate availability information. By adopting an event-driven model, the business can provide a seamless and responsive experience that builds consumer confidence.
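The receiving side of a webhook can be sketched as a small dispatcher that routes each pushed event to a handler. The topic names (`order.created`, `inventory.updated`), the payload fields, and the handler behavior are hypothetical; a real platform defines its own event names and payload shapes, and a production receiver would also verify the sender's signature.

```python
import json
from typing import Callable

Handler = Callable[[dict], str]
HANDLERS: dict[str, Handler] = {}


def on(topic: str) -> Callable[[Handler], Handler]:
    """Register a handler for one event topic."""
    def register(fn: Handler) -> Handler:
        HANDLERS[topic] = fn
        return fn
    return register


@on("order.created")
def handle_order_created(payload: dict) -> str:
    return f"reserve stock for order {payload['order_id']}"


@on("inventory.updated")
def handle_inventory_updated(payload: dict) -> str:
    return f"set {payload['sku']} availability to {payload['quantity']}"


def receive_webhook(raw_body: str) -> str:
    """Parse the pushed event and hand it to the matching handler."""
    event = json.loads(raw_body)
    handler = HANDLERS.get(event["topic"])
    if handler is None:
        return "ignored"  # unknown topics are acknowledged but not acted on
    return handler(event["payload"])
```

The point of the pattern is that the source system pushes the event the moment it happens, so no downstream system has to poll for changes.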

Strolling with Analytical Batch Processing

In contrast, data used for long-term analysis, such as end-of-day financial reconciliation or monthly sales reporting, does not need to move in real time. For these next-day requirements, batch processing using bulk APIs is often more efficient. This method allows the system to move large volumes of data during off-peak hours, reducing the load on operational systems and ensuring that analytical tools have a complete and consistent dataset to work with. Batch processing is a cost-effective way to handle high-volume data without compromising the performance of the live storefront.
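A batch job typically splits a large export into fixed-size chunks before handing each one to a bulk endpoint. The helper below is a minimal sketch of that chunking step; the batch size and the record shape are assumptions, and the actual bulk API call is left out because it depends on the platform.

```python
from typing import Iterable, Iterator


def chunked(records: Iterable[dict], batch_size: int) -> Iterator[list[dict]]:
    """Yield successive lists of at most batch_size records."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    batch: list[dict] = []
    for record in records:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # final partial batch
        yield batch


# Example: five end-of-day order rows split into batches of two.
rows = [{"order_id": str(n)} for n in range(1, 6)]
batch_sizes = [len(b) for b in chunked(rows, 2)]  # [2, 2, 1]
```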

Step 4: Formalize the Data Contract

A successful integration requires all participating systems to agree on a formal data contract that defines exactly what the data means and how it should be formatted. Without these strict rules, systems may misinterpret information, leading to errors in shipping, billing, or reporting. The data contract acts as a universal translator, ensuring that a date or a currency value is understood correctly by every application in the stack. This standardization is the foundation of a reliable and scalable integration strategy that can support complex global operations.

Standardizing Universal Identifiers and Timestamps

One of the most important aspects of the data contract is the use of universal identifiers and a consistent timestamp format. Every system should use the ISO 8601 UTC format for time to prevent confusion across different time zones. Furthermore, mapping a primary reference key, such as a Shopify Order ID, across all systems ensures that every department is looking at the same record. This consistency prevents the creation of ghost records or duplicate entries that can plague organizations with fragmented systems.
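As a rough illustration, the normalization step might look like the following. The field names (`order_id`, `updated_at`, `source_system`) and the `normalize_record` helper are assumptions made for the example; the timestamp conversion uses only the Python standard library.

```python
from datetime import datetime, timedelta, timezone


def to_utc_iso8601(moment: datetime) -> str:
    """Convert a time zone aware datetime to an ISO 8601 string in UTC."""
    if moment.tzinfo is None:
        raise ValueError("naive timestamps are ambiguous; attach a time zone first")
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def normalize_record(order_id: str, updated_at: datetime, source_system: str) -> dict:
    """Stamp a record with the shared order key and a UTC timestamp."""
    return {
        "order_id": order_id,  # primary reference key shared by every system
        "updated_at": to_utc_iso8601(updated_at),
        "source_system": source_system,
    }


# Example: a warehouse in a UTC-5 time zone records an update at 09:30 local time.
utc_minus_5 = timezone(timedelta(hours=-5))
example = normalize_record("1001", datetime(2024, 3, 1, 9, 30, tzinfo=utc_minus_5), "wms")
# example["updated_at"] == "2024-03-01T14:30:00Z"
```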

Implementing Field Validation and Semantic Rules

To maintain high data quality, the integration should include strict field validation and semantic rules that prevent bad data from moving downstream. For example, a rule could be established that a fulfillment date can never be earlier than the order date, or that a zip code must match the expected format for a specific country. By catching these errors at the point of entry, the business can avoid costly mistakes in logistics and finance. These rules act as a gatekeeper, ensuring that only clean, accurate information is allowed to circulate through the company’s digital veins.
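The two example rules above can be expressed as a validator that returns a list of problems rather than failing on the first one. This is a sketch under assumptions: the record fields are hypothetical, and the postal patterns cover only a US ZIP code (five digits, optionally followed by a four-digit extension) and a simplified Canadian format.

```python
import re
from datetime import date

# Illustrative patterns only; a real deployment needs a rule per supported country.
POSTAL_PATTERNS = {
    "US": re.compile(r"\d{5}(-\d{4})?"),
    "CA": re.compile(r"[A-Z]\d[A-Z] ?\d[A-Z]\d"),  # simplified
}


def validate_order(record: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the record is clean."""
    errors: list[str] = []
    if record["fulfillment_date"] < record["order_date"]:
        errors.append("fulfillment_date is earlier than order_date")
    pattern = POSTAL_PATTERNS.get(record["country"])
    if pattern is None:
        errors.append(f"no postal code rule for country {record['country']}")
    elif not pattern.fullmatch(record["postal_code"]):
        errors.append("postal_code does not match the expected format")
    return errors


good = {"order_date": date(2024, 3, 1), "fulfillment_date": date(2024, 3, 3),
        "country": "US", "postal_code": "94107"}
bad = {"order_date": date(2024, 3, 1), "fulfillment_date": date(2024, 2, 28),
       "country": "US", "postal_code": "9410"}
```

Here `validate_order(good)` returns an empty list, while `validate_order(bad)` reports both the date and the postal code violations, so the record can be held back before it reaches logistics or finance.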

Step 5: Implement Comprehensive Observability

An integration is only as good as the ability to see when it breaks, making observability a critical component of any modern commerce stack. In a distributed system, failures can occur in many places, from network interruptions to API rate limits or data format changes. Comprehensive monitoring allows the technical team to pinpoint exactly where an issue has occurred, often before the end-user is even aware of a problem. This visibility is essential for maintaining the high levels of uptime and reliability that enterprise-scale commerce demands.

Monitoring Webhook Health and Delivery Retries

Technical teams should set up automated alerts to track the health of webhook deliveries, looking for signs of drifting response times or spiking retry rates. An increase in retries often indicates that a downstream system is struggling to keep up with the volume of data or is experiencing internal errors. By monitoring these signals, the team can proactively scale resources or investigate potential bottlenecks. This proactive approach to maintenance ensures that the data flow remains consistent and that the business can continue to operate smoothly during peak traffic periods.
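An alert rule of this kind can be sketched as a check over one monitoring window. The thresholds (a 5 percent retry rate and a 2,000 ms 95th-percentile response time) and the `WebhookWindow` structure are assumptions chosen for illustration; appropriate limits depend on the systems involved.

```python
from dataclasses import dataclass


@dataclass
class WebhookWindow:
    """Delivery statistics for one monitoring window (for example, five minutes)."""
    deliveries: int
    retries: int
    p95_response_ms: float


def check_window(window: WebhookWindow,
                 max_retry_rate: float = 0.05,
                 max_p95_ms: float = 2000.0) -> list[str]:
    """Return alert messages for any threshold the window exceeds."""
    alerts: list[str] = []
    rate = window.retries / window.deliveries if window.deliveries else 0.0
    if rate > max_retry_rate:
        alerts.append(f"retry rate {rate:.1%} exceeds {max_retry_rate:.1%}")
    if window.p95_response_ms > max_p95_ms:
        alerts.append(
            f"p95 response time {window.p95_response_ms:.0f} ms exceeds {max_p95_ms:.0f} ms"
        )
    return alerts
```

A healthy window such as `WebhookWindow(1000, 20, 800.0)` produces no alerts, whereas `WebhookWindow(1000, 120, 2500.0)` trips both rules.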

Using Idempotency to Prevent Duplicate Records

In the world of distributed systems, communication errors are inevitable, which can lead to the same request being sent multiple times. To prevent this from causing errors, such as a customer being charged twice or an inventory level being deducted incorrectly, the system should use idempotency keys. These keys ensure that even if a request is received multiple times, the destination system only processes it once. Implementing idempotency is a vital safeguard that protects the integrity of the data and ensures a consistent experience for both the business and its customers.
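The mechanism can be shown with a small in-memory example. The `InventoryService` class, its starting stock, and the key format are hypothetical; a production system would persist the processed keys in a durable store rather than a dictionary.

```python
class InventoryService:
    """Toy destination system that deduplicates requests by idempotency key."""

    def __init__(self) -> None:
        self.stock = {"SKU-1": 10}
        self._results: dict[str, dict] = {}  # idempotency key -> stored response

    def deduct_stock(self, idempotency_key: str, sku: str, quantity: int) -> dict:
        # A repeated key returns the stored result without touching stock again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.stock[sku] -= quantity
        result = {"sku": sku, "remaining": self.stock[sku]}
        self._results[idempotency_key] = result
        return result


service = InventoryService()
first = service.deduct_stock("order-1001-line-1", "SKU-1", 2)
retry = service.deduct_stock("order-1001-line-1", "SKU-1", 2)  # network retry
# Stock was deducted once: service.stock["SKU-1"] == 8, and first == retry.
```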

Step 6: Pressure-Test Failure Modes and the “Happy Path”

Before moving an integration to a live production environment, it must be rigorously tested to ensure it can handle both perfect scenarios and unexpected failures. Testing only the happy path, where everything works as expected, is a common mistake that leads to system crashes under real-world stress. A robust testing strategy involves simulating various failure modes, such as network outages or malformed payloads, to see how the system responds. This process builds confidence in the resilience of the integration and prepares the team to handle any issues that may arise after launch.

Validating Meaning Across the Stack

A happy path test should confirm that a standard transaction flows through the entire system correctly, with all data points landing in the right places. This includes verifying that taxes are calculated correctly, discounts are applied as intended, and custom metadata is preserved across all platforms. The goal is to ensure that the business logic remains consistent as the data moves from the customer’s browser to the warehouse floor and finally to the financial reports. This end-to-end validation is crucial for ensuring that the integrated system supports the core goals of the business.

Breaking the Path to Test Resilience

To truly test the resilience of the integration, the team should intentionally introduce errors into the system, such as failing a webhook or sending a non-existent SKU. These tests confirm that the system can gracefully handle exceptions by triggering alerts and routing the bad data to a dead-letter queue rather than crashing the entire pipeline. This level of fault tolerance is essential for maintaining operational continuity in a complex, multi-system environment. By preparing for the worst-case scenario, the organization can ensure that it remains agile and responsive even when technical challenges occur.
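A dead-letter queue can be as simple as a second list that collects rejected records together with the reason for rejection. In this sketch the known SKU set, the record fields, and the `run_pipeline` function are illustrative assumptions.

```python
KNOWN_SKUS = {"SKU-1", "SKU-2"}  # hypothetical catalog


def process_line(line: dict) -> str:
    """Allocate stock for one order line; reject SKUs the catalog does not know."""
    if line["sku"] not in KNOWN_SKUS:
        raise ValueError(f"unknown SKU {line['sku']}")
    return f"allocated {line['quantity']} x {line['sku']}"


def run_pipeline(lines: list[dict]) -> tuple[list[str], list[dict]]:
    """Process every line; failures go to the dead-letter list instead of stopping the run."""
    processed: list[str] = []
    dead_letters: list[dict] = []
    for line in lines:
        try:
            processed.append(process_line(line))
        except (ValueError, KeyError) as exc:
            dead_letters.append({"record": line, "error": str(exc)})
    return processed, dead_letters


processed, dead_letters = run_pipeline([
    {"sku": "SKU-1", "quantity": 2},
    {"sku": "SKU-404", "quantity": 1},   # deliberately non-existent SKU
    {"sku": "SKU-2", "quantity": 5},
])
```

The bad record lands in `dead_letters` with its error message while the two valid lines are still processed, which is the behavior a resilience test should confirm.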

Step 7: Execute a Phased Rollout and Monitor Divergence

The final stage of the integration journey is a controlled transition from the old way of working to the new, integrated ecosystem. A sudden cutover can be risky, especially for high-volume retailers, as any unforeseen issues can immediately impact revenue. A phased rollout allows the team to monitor the system in a live environment while limiting the potential impact of any errors. This cautious approach ensures that the integration is stable and performing as expected before it is fully adopted across the entire organization.

Choosing Between Parallel Runs and Canary Rollouts

Organizations often choose between running the new system in parallel with the old one or performing a canary rollout to a specific region or customer segment. A parallel run allows for a direct comparison of the data produced by both systems, ensuring that the new integration is accurate. A canary rollout, on the other hand, limits risk by exposing only a small portion of the business to the new system. Both strategies provide valuable insights and allow for fine-tuning before the full launch, ensuring a smooth and successful transition for the entire enterprise.
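Both strategies can be reduced to small, testable functions. The sketch below assumes a hash-based assignment of customers to the canary group and a field-by-field comparison of the outputs from a parallel run; the function names and the sample figures are hypothetical.

```python
import hashlib


def in_canary(customer_id: str, percent: int) -> bool:
    """Deterministically place roughly `percent` percent of customers in the canary group."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


def find_divergence(legacy: dict, candidate: dict) -> dict:
    """Compare the outputs of a parallel run; return keys whose values differ."""
    keys = legacy.keys() | candidate.keys()
    return {
        key: (legacy.get(key), candidate.get(key))
        for key in sorted(keys)
        if legacy.get(key) != candidate.get(key)
    }


# Example: stock counts reported by the old and new integrations for the same moment.
legacy_counts = {"SKU-1": 10, "SKU-2": 4}
candidate_counts = {"SKU-1": 10, "SKU-2": 3, "SKU-3": 7}
diff = find_divergence(legacy_counts, candidate_counts)
# diff == {"SKU-2": (4, 3), "SKU-3": (None, 7)}
```

Hashing the customer ID keeps each customer in the same group across requests, so a canary shopper never flips between the old and new paths mid-session.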

Tracking Real-World Performance Improvements

Once the integrated system is live, the focus should shift to monitoring real-world performance improvements and looking for any signs of data divergence. A successful integration should result in a measurable reduction in order propagation latency and an increase in overall operational efficiency. Over time, these technical improvements will translate into higher customer satisfaction scores and improved conversion rates. By tracking these metrics, the organization can quantify the return on investment for the integration project and identify areas for further optimization in the future.

Summary of the Integration Journey

A successful integration journey is defined by a series of strategic choices that prioritize long-term stability and business value over quick, temporary fixes. By targeting high-revenue-risk flows first, an organization can protect its most important assets while building the foundation for more complex integrations. Assigning a clear source of authority for every data point eliminates confusion and ensures that all departments are working from the same set of facts. This structural clarity is essential for maintaining data integrity as the business scales and evolves in an increasingly complex digital landscape.

Furthermore, matching the speed of data movement to its operational or analytical purpose ensures that system resources are used efficiently. Enforcing strict data contracts and universal identifiers prevents the errors and inconsistencies that often plague fragmented systems. By building in comprehensive observability and testing for both success and failure, the technical team can ensure the system is resilient enough to handle the demands of a modern enterprise. Finally, executing a phased rollout protects live revenue and allows for a controlled transition to a more automated and integrated future.

Applying Integration Logic to Future Commerce Trends

As the retail landscape continues to evolve, the role of ecommerce data integration will expand to include even more sophisticated capabilities. The rise of Reverse ETL is one such trend, where analytical insights from a centralized data warehouse are pushed back into operational systems to drive real-time personalization. This allows a brand to use complex data models to offer specific discounts or product recommendations to customers while they are still on the storefront. Mastering the fundamentals of integration is a prerequisite for adopting these advanced techniques and staying ahead of consumer expectations.

Moreover, the increasing reliance on artificial intelligence for inventory forecasting and customer service makes data-readiness a top priority for forward-thinking organizations. AI models require clean, structured, and timely data to produce accurate results, and a well-integrated system is the only way to provide this at scale. Companies that have successfully implemented the seven steps outlined in this guide will be much better positioned to leverage AI to optimize their supply chains and enhance the customer experience. The investment in integration today is essentially an investment in the company’s ability to compete in the algorithmic commerce era of tomorrow.

Conclusion: Turning Data from a Liability into an Asset

The journey toward full ecommerce data integration shifts from a technical necessity to a strategic advantage once an organization bridges the gaps between its disparate systems. By following the seven-step roadmap, a business can dismantle the silos that previously hindered growth and foster a culture of transparency and efficiency. Eliminating manual handoffs and establishing authoritative sources of truth provides a foundation for more reliable operations, allowing teams to respond to market shifts with greater agility. This shift turns what was once a liability of fragmented information into an asset that fuels innovation and customer loyalty.

In the post-integration phase, the ability to synchronize inventory, orders, and customer data in real time is only the beginning. A high-performance commerce engine becomes the staging ground for advanced initiatives such as hyper-personalized marketing and automated supply chain management. By prioritizing observability and resilience, technical leaders can ensure that their systems withstand the pressures of peak demand and technical disruptions. A unified data layer ultimately allows an enterprise to reclaim millions in operational value, making a methodical approach to integration an effective path toward sustainable success in a digital-first economy.