Zainab Hussain, an e-commerce strategist with deep expertise in customer engagement and operations, brings a wealth of knowledge to the evolving landscape of enterprise AI. Having navigated the shift from manual support workflows to complex, automated ecosystems, she understands the friction points where technology meets human effort. In this discussion, we explore the findings of the 2026 Agentic AI Index, examining the “efficiency paradox” where 72% of agents see gains in speed but only 42% feel a reduction in workload. Zainab provides a roadmap for moving beyond fragmented tools toward a unified, orchestrated customer experience that prioritizes accountability and accuracy.

Many customer service departments currently operate AI as a collection of disconnected tools rather than a unified system. How does this lack of coordination specifically hinder agent productivity, and what are the first steps a company should take to build a more integrated technical architecture?

The primary friction arises when an agent has to act as a manual bridge between systems, which explains why 81% of teams still struggle with disconnected tools. When drafting tools don’t talk to routing systems, agents waste time context-switching and re-verifying information that should already be synced. To fix this, the first step is auditing the tech stack to identify “data silos” where customer intent is lost between the AI summarizing a ticket and the AI suggesting a resolution. Companies must then prioritize an integration layer that lets these tools share a single source of truth, so that the experience of the one in five agents who currently see their systems working together becomes the organizational standard.

While a majority of agents report that AI improves efficiency, fewer than half feel it meaningfully reduces their actual workload. Why is this “efficiency paradox” occurring, and how can leadership ensure that automation truly eliminates tasks instead of just shifting the manual effort elsewhere?

The “efficiency paradox” reflects the fact that while AI can generate a response in seconds, the agent is still tethered to the screen to babysit the output. Our data shows that while 72% of representatives feel more efficient, only 42% say their actual effort has decreased; the labor has simply shifted from “writing” to “monitoring and auditing.” Leadership needs to move away from measuring success solely by “Average Handle Time” and start looking at “Touchless Resolution” rates. By empowering AI to handle end-to-end tasks like simple refunds without human intervention, we can stop the cycle where agents feel like they are just proofreaders for a machine.
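
To make the difference between the two metrics concrete, here is a minimal sketch in Python. The `Ticket` fields and the definition of “touchless” (zero human edits, approvals, or replies) are assumptions made for illustration, not definitions taken from the Index, and the sample numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    handle_seconds: float  # time from open to resolution (hypothetical field)
    human_touches: int     # human edits, approvals, or replies (hypothetical field)


def average_handle_time(tickets):
    """Mean handle time in seconds across all tickets."""
    if not tickets:
        raise ValueError("no tickets to measure")
    return sum(t.handle_seconds for t in tickets) / len(tickets)


def touchless_resolution_rate(tickets):
    """Share of tickets resolved with no human involvement at all."""
    if not tickets:
        raise ValueError("no tickets to measure")
    touchless = sum(1 for t in tickets if t.human_touches == 0)
    return touchless / len(tickets)


# Invented sample: every ticket is fast, but only one needed no human.
tickets = [
    Ticket(handle_seconds=40, human_touches=1),
    Ticket(handle_seconds=35, human_touches=2),
    Ticket(handle_seconds=30, human_touches=0),
    Ticket(handle_seconds=55, human_touches=1),
]

aht = average_handle_time(tickets)
rate = touchless_resolution_rate(tickets)
print(f"Average Handle Time: {aht:.1f}s, Touchless Resolution: {rate:.0%}")
```

In this invented sample the handle time looks healthy while three of four tickets still needed a person, which is the pattern the paradox describes.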

Roughly half of support representatives must regularly correct AI-generated mistakes, sometimes only discovering errors after a customer complains. What specific supervision protocols should be implemented to catch these “hidden” errors, and how can human-in-the-loop feedback loops be used to improve model accuracy?

It is deeply concerning that 10% of agents only find out about an AI error when an angry customer points it out, as this erodes brand trust instantly. We need to implement real-time confidence scoring where the AI flags its own uncertainty, automatically routing low-confidence drafts to a human supervisor before they ever reach the customer. Establishing a “closed-loop” feedback system is vital; when an agent corrects an AI mistake, that correction should be fed back into the model’s training data to prevent the same error from recurring. Without these protocols, the nearly 50% of agents currently correcting mistakes are just putting out fires rather than building a fireproof system.
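
As a rough illustration of confidence-based routing and correction capture, consider the Python sketch below. The threshold value, the `Draft` fields, and the `FeedbackLog` class are all hypothetical. It assumes the model exposes a confidence score between 0 and 1, and it only collects correction pairs; how those pairs reach training data depends on the model provider.

```python
from dataclasses import dataclass, field

# Assumed cutoff for illustration; a real value would be tuned on error data.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class Draft:
    ticket_id: str
    text: str
    confidence: float  # model's self-reported confidence in [0, 1] (assumption)


@dataclass
class FeedbackLog:
    """Collects human corrections so they can later be reviewed or used for training."""
    records: list = field(default_factory=list)

    def record_correction(self, draft, corrected_text):
        self.records.append({
            "ticket_id": draft.ticket_id,
            "ai_text": draft.text,
            "human_text": corrected_text,
            "confidence": draft.confidence,
        })


def route(draft, threshold=CONFIDENCE_THRESHOLD):
    """Send confident drafts onward; hold uncertain ones for human review."""
    if draft.confidence >= threshold:
        return "send_to_customer"
    return "human_review"


log = FeedbackLog()
confident = Draft("T-1", "Your refund was issued today.", confidence=0.95)
uncertain = Draft("T-2", "Your order ships in 2 days.", confidence=0.40)

for draft in (confident, uncertain):
    print(draft.ticket_id, "->", route(draft))

# A reviewer fixes the uncertain draft; the pair is kept for the feedback loop.
log.record_correction(uncertain, "Your order ships in 5 business days.")
```

Storing the confidence alongside each correction also makes it possible to check later whether the threshold is catching the drafts that actually needed fixing.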

As AI handles complex actions like refunds or cancellations, ownership of the final customer outcome often becomes blurred. How should departments define accountability when an automated system fails, and what specialized training do agents need to effectively manage these increasingly autonomous workflows?

With just under 20% of teams reporting unclear ownership, we are seeing a dangerous “accountability gap” where neither the machine nor the human feels responsible for a botched refund. Departments must establish clear “Rules of Engagement” that dictate exactly when an AI agent’s autonomy ends and a human’s authority begins. Training needs to pivot from basic product knowledge to “agentic specialization,” teaching representatives how to debug automated workflows and intervene mid-process. Agents should be viewed as “AI Orchestrators” who are accountable for the final resolution, backed by a system that logs every automated decision for easy auditing.
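
One way to picture “Rules of Engagement” with an audit trail is the Python sketch below. The action names, the 50.00 refund limit, and the log fields are hypothetical choices for illustration. The sketch assumes that any action without an explicit rule goes to the human owner.

```python
from datetime import datetime, timezone

# Hypothetical limits: the AI may act alone only at or below these amounts.
# Any action not listed here always goes to a human.
AUTONOMY_LIMITS = {"refund": 50.00}

audit_log = []


def decide(action, amount, owner):
    """Decide who handles an action and log the decision for later auditing.

    `owner` is the human accountable for the final outcome either way.
    """
    limit = AUTONOMY_LIMITS.get(action)
    autonomous = limit is not None and amount <= limit
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "amount": amount,
        "handled_by": "ai" if autonomous else "human",
        "accountable_owner": owner,
        "rule_applied": f"{action} limit {limit}" if limit is not None else "no rule: escalate",
    }
    audit_log.append(entry)
    return entry


small = decide("refund", 20.00, owner="agent_7")
large = decide("refund", 120.00, owner="agent_7")
cancel = decide("cancellation", 0.00, owner="agent_7")

for entry in audit_log:
    print(entry["action"], entry["amount"], "->", entry["handled_by"], "|", entry["rule_applied"])
```

Every entry names an accountable owner even when the AI acts alone, so a botched refund always has a person attached to it.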

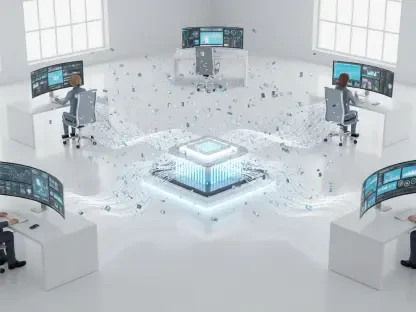

Most teams currently lack an orchestration layer to manage the various AI agents drafting responses or routing tickets. What are the operational risks of scaling without such a layer, and how does a centralized coordination system change the daily experience for a frontline representative?

Scaling without an orchestration layer is like trying to run a symphony orchestra without a conductor; every instrument might be playing well, but the result is just noise. The operational risk is a fragmented customer journey where a customer receives conflicting information from a chatbot and a follow-up email draft. A centralized system changes the agent’s daily life by providing a unified dashboard where they can see the “reasoning” behind AI actions across all channels. Instead of hunting through different tools to see why a ticket was routed a certain way, the agent spends their energy on high-value empathy and complex problem-solving.

What is your forecast for agentic AI in customer service?

I anticipate a significant shift toward “Super-Agents” who manage fleets of specialized AI bots, moving the human role from execution to high-level oversight. Within the next few years, the 81% of teams currently using disconnected tools will either integrate them or be outpaced by competitors who can offer seamless, 24/7 autonomous support. We will see the “efficiency paradox” resolve as orchestration layers become the industry standard, finally allowing the human workload to drop in proportion to AI’s rising capabilities. Ultimately, the future of CX isn’t about replacing humans, but about creating a sophisticated partnership where technology handles the logic and humans provide the soul.